The last year has changed music forever.

AI-generated songs are no longer a fringe experiment. They are on streaming platforms, in private demos, in beat marketplaces, in remix debates, in copyright arguments, and in artist communities trying to separate what is human-made from what is machine-assisted. That shift is exactly why we have continued investing in our AI Music Detector: not as a gimmick, not as a fear machine, and not as a fake promise of absolute certainty, but as a serious tool designed to help artists, producers, labels, curators, remixers, and rights holders make more informed decisions. We also want to be upfront that current AI music detection tools have limitations: they can be unreliable or easily fooled, and none can separate AI-generated from human-created tracks with complete certainty.

Today, we are rolling out one of the biggest upgrades we have made to the detector so far.

This update improves how our system reviews audio, strengthens the range of signals it considers, makes our analysis pipeline more resilient under load, improves transparency in the way results are delivered, and adds several important capabilities that help the broader music community handle one of the biggest challenges in the current era: understanding whether a track may have been AI-generated, heavily AI-assisted, or created by a human artist using traditional methods.

We are excited about this release because it is not just about making scores better. It is about making the full experience more useful. There is also a fun, experimental side to it: users can explore how the detector responds to different types of music as the technology keeps evolving.

That means faster processing. Better orchestration. More nuanced analysis. Better handling of complex tracks. More stable job execution. Better tracking of what the system is seeing. More reliable queueing. Better support for scale. And most importantly, a stronger overall framework for giving creators and music professionals a meaningful signal when they need one. One caveat: hybrid audio that combines AI-generated and human-created elements remains especially hard for any detector to classify accurately.

At the same time, we want to be clear: we are not publishing our full internal formula, decision thresholds, weighting system, or proprietary fusion methods. That is intentional. We believe in sharing what has improved and why it matters, while protecting the underlying mechanics that make the detector valuable. Knowing how AI music detection has evolved also helps put both its strengths and its current limitations in context.

So in this update, we want to walk through what has changed, why it matters, and how these improvements help the people who actually need this tool in the real world. Informed decisions depend on recognizing that the differences between AI-generated and human-created music can be subtle yet significant, and that identifying them with certainty remains an ongoing challenge.

Why this update matters right now

The conversation around AI music is getting louder every month.

Artists are being accused of using AI when they did not. Other tracks are slipping through the cracks because they sound polished enough to appear human-made. Producers are trying to protect original work. Rights holders are reviewing suspicious uploads. Fans are arguing in comments sections. Remixers are getting challenged. Platforms are being asked to take authenticity more seriously. And attorneys, A&Rs, curators, and managers are being pulled into disputes that often begin with one simple question:

Is this song likely AI-generated?

The problem is that this is not a simple question anymore.

One signal alone is not enough. A track can be compressed, mastered, stem-processed, exported multiple times, clipped for social media, or altered by human editing after AI generation. Some AI tracks show obvious artifacts. Others are cleaner. Some human tracks are highly quantized and processed, which can create false assumptions if a detector is simplistic. That is why we have kept moving away from any single-factor idea of detection.

Our latest update pushes even further into a multi-signal, layered review approach, similar in spirit to other comprehensive multi-model frameworks for detecting AI-generated music.

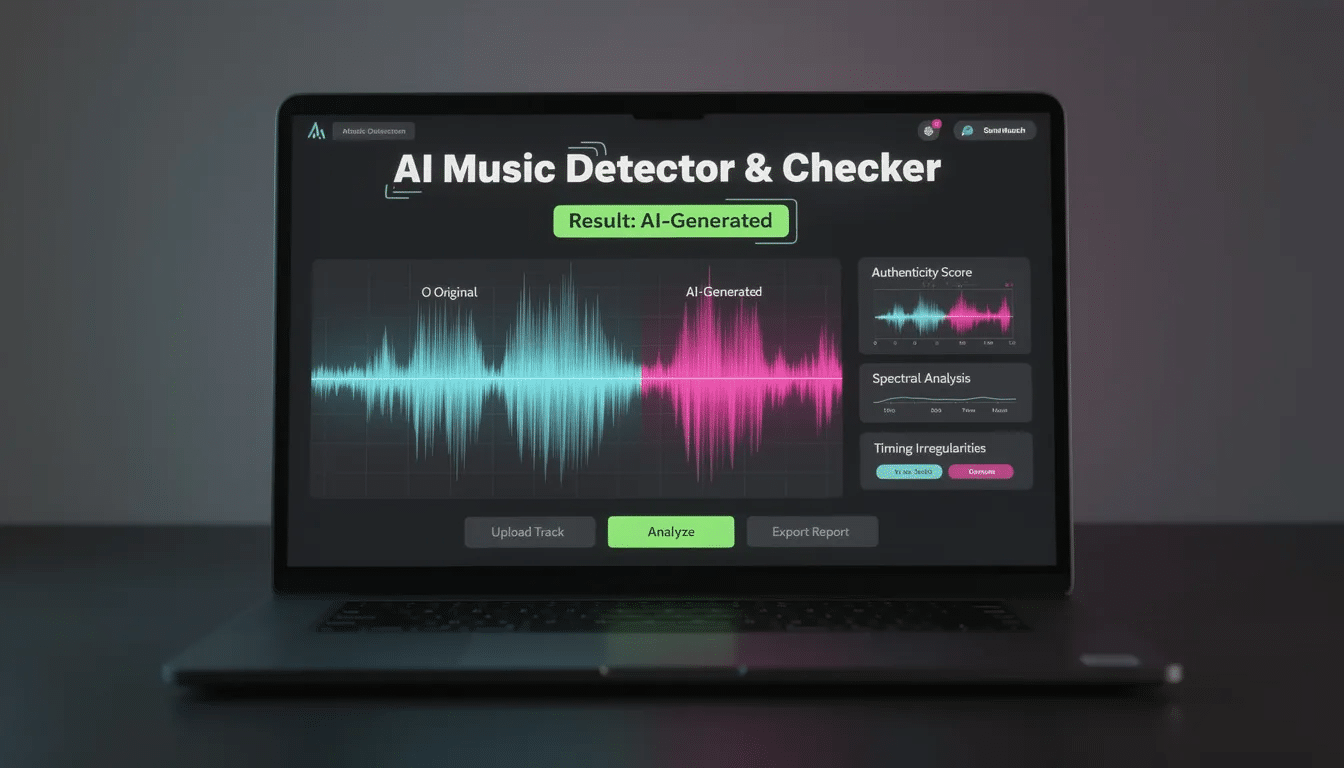

Instead of leaning too heavily on one surface pattern, the system now does a better job of combining multiple analysis perspectives: broad-spectrum signals, rhythmic behavior, vocal characteristics, structural energy consistency, provenance clues, stem-aware inspection, and model-backed audio classification. That combination matters because suspicious audio rarely reveals itself in only one place.

Real-world detection needs context. It needs signal overlap. It needs a smarter way to distinguish between “unusual,” “heavily processed,” and “likely synthetic.” In practice, experienced listeners who listen closely can often hear subtle cues across the frequency spectrum that help them distinguish AI-generated music from human-created tracks.

That is what this upgrade is built to do.

A stronger multi-signal detection engine

The biggest shift in this release is the move to a more mature multi-signal AI music detection architecture.

Our previous version already looked at several important traits inside the audio. It examined patterns in the signal, used stem-aware analysis, and fused multiple forensic indicators into a final output. That foundation worked, but we knew it could go much further.

The new version expands that foundation significantly.

Without exposing the private recipe, here is what has changed at a high level:

- We now combine more detection perspectives than before.

- We use a broader mix of audio-forensic, structural, stem-aware, and model-backed signals.

- We improved the way those signals are fused into a final result.

- We strengthened the system’s ability to handle ambiguous cases more carefully.

- We added new checks that help the detector reason about both audio artifacts and provenance clues.

- We improved how the engine reacts when multiple suspicious indicators appear together.

That last point is especially important.

In the real world, suspicious tracks often exhibit a pattern of alignment across several dimensions. It is not just one strange texture. It might be a combination of timing regularity, spectral behavior, vocal oddities, suspicious coherence, metadata evidence, and structural consistency that together paint a clearer picture than any single clue could on its own.

This update gives our engine a better way to detect that kind of overlap, especially when combined with advances in AI audio stem splitting techniques and modern AI stem splitter and vocal remover tools that expose artifacts inside individual layers of a mix.

New provenance-aware checks

One of the most important additions in this release is better support for provenance-aware analysis.

In plain English, that means the detector is now more capable of checking whether a file carries machine-readable evidence that points toward AI generation or synthetic content workflows. Provenance is becoming a bigger part of the media ecosystem, and it matters because not every clue has to live purely in the sound itself.

If a file includes trusted metadata or content credentials that strongly suggest AI-generated origin, that can be incredibly useful context. It does not replace audio analysis, but it can strengthen confidence when present.

Platforms like YouTube and Deezer use AI to detect and flag fully AI-generated songs, helping manage content and shape algorithmic recommendations. AI tools can also automatically analyze and tag songs with detailed metadata, making music discovery and search functions more efficient.

This addition helps our platform better support a future where content credentials and authenticity layers become more important across music, video, and digital media workflows.

For artists and industry professionals, that means the detector is not just listening to the song. It is also getting better at reviewing the broader authenticity picture.

A new macro-level view of the song

Another major improvement is that the detector no longer depends only on one narrow listening perspective.

Many older detectors, across the industry and not just ours, focus on a short excerpt and hope the most suspicious section happens to be there. That can work sometimes, but it also misses context. Some artifacts are spread across the full song. Some suspicious traits only show up strongly in certain sections. Some tracks have one highly revealing section and several cleaner ones.

Our updated engine now does a better job of balancing whole-track context with high-value excerpt analysis.

That matters a lot.

A full-song perspective helps the system detect broader structural and energy patterns that can be revealing over time, while a targeted high-energy slice helps focus deeper analysis on the section most likely to expose synthetic artifacts, unusual behavior, or unnatural consistency. This dual-view approach makes the detector more practical for modern music, where arrangement, vocal density, beat drops, and layered sections can vary dramatically across a track. The widespread use of DAWs like Logic Pro in both AI and human music production further complicates detection, as these tools enable sophisticated arrangements and processing that can blur the line between AI-generated and human-created content—especially when paired with AI systems that are revolutionizing audio mastering workflows.

For the user, the result is simple: the detector is making its decision from a stronger listening position.
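To make the dual-view idea concrete, here is a minimal sketch of one way to pick a high-energy excerpt while still keeping a whole-track measurement. It is an illustration only, not the detector's actual implementation: the function name `highest_energy_window`, the mono-signal assumption, and the choice of squared amplitude as the "energy" measure are all assumptions made for this example.

```python
import numpy as np


def highest_energy_window(samples: np.ndarray, sample_rate: int,
                          window_seconds: float) -> tuple[int, int]:
    """Return (start, end) sample indices of the loudest fixed-length window."""
    window = int(window_seconds * sample_rate)
    if window <= 0:
        raise ValueError("window_seconds must cover at least one sample")
    if window >= len(samples):
        return 0, len(samples)  # track is shorter than the window: use it all
    # Prefix sums of squared amplitude give every window's energy in O(n).
    prefix = np.concatenate(([0.0], np.cumsum(samples.astype(np.float64) ** 2)))
    window_energy = prefix[window:] - prefix[:-window]
    start = int(np.argmax(window_energy))
    return start, start + window


# Synthetic mono "track": 3 s of silence with a loud 1 s burst in the middle.
sample_rate = 1000
track = np.zeros(3 * sample_rate)
track[1000:2000] = 1.0

start, end = highest_energy_window(track, sample_rate, window_seconds=1.0)
excerpt = track[start:end]                             # targeted slice
full_track_rms = float(np.sqrt(np.mean(track ** 2)))   # whole-track context
```

A real pipeline would likely use a perceptual loudness measure rather than raw energy, but the shape is the same: one pass for whole-track statistics, one targeted slice for deeper inspection.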

Better stem-aware analysis

We have also doubled down on something we believe is essential for serious AI music detection: stem-aware analysis.

Music is layered. A full mix can hide things. Suspicious patterns are sometimes buried beneath drums, mastering, reverbs, widening, bass energy, and other production choices. Effects and processing can make it hard for AI music detectors to distinguish AI-generated from human-created music, and detectors can be manipulated or return false positives. The same challenge appears in free and freemium AI stem splitters and vocal removers, such as a browser-based free AI vocal remover and stem splitter. If you only inspect the two-channel final output at face value, you can miss what is happening inside the vocal layer, the rhythmic layer, or the broader arrangement.

That is why our detector continues to use source separation as part of its review workflow. In this update, stem-aware analysis is better integrated into the broader system, building on core music stem concepts and workflows.

At a high level, that means the detector can more intelligently isolate and inspect different components of a track when building its final assessment. This helps with areas like:

- vocal behavior

- drum timing and groove relationships

- structural separation between key musical elements

- artifact exposure that may be masked in the full mix

This is especially useful in today’s environment because many debated tracks are not raw exports. They are polished, edited, mastered, and sometimes partially reworked by humans after AI generation. A stem-aware system gives us a better shot at spotting the fingerprints that survive those post-processing steps.

Again, we are not giving away the exact secret sauce. But the practical takeaway is straightforward: our detector is getting better at looking inside the song, not just at it.

Expanded signal diversity for harder cases

One of the biggest weaknesses in basic AI song detectors is overconfidence.

A lot of tools make a snap judgment from too little evidence. That is dangerous. It can mislead artists, frustrate users, and create false certainty in situations that are actually nuanced.

This update pushes our detector in the opposite direction.

We have expanded the diversity of signals the system can consider so that it is less dependent on a narrow pattern family and better equipped for harder cases. That includes cases where:

- a human-made track is highly processed,

- an AI-generated track has been edited heavily after generation,

- the vocals are subtle or intermittent,

- the rhythmic structure is unusually rigid,

- the mix quality masks surface artifacts,

- or the suspicious elements only become obvious when several weaker clues are combined.

This broader signal diversity makes the platform more useful to real users because real-world audio is messy, especially when creators are using AI-powered tools to remove backing vocals and reshape mixes. Detection should reflect that reality. Results can still surprise users when tracks are heavily processed or hybrid, because detection is not always reliable in those cases.

Our goal is not to sensationalize. It is to give the community a stronger, more grounded signal when the question matters.

Faster parallel analysis under the hood

This release is not only about detection quality. It is also a major performance and infrastructure update.

We have improved the way the system handles concurrent work so it can better support multiple analysis jobs without becoming unstable or sluggish. That matters because good detection is not just about intelligence. It is also about user experience. If people upload tracks and the queue becomes unreliable, the value of the tool collapses no matter how smart the models are.

The updated version is designed to make better use of modern compute resources, process heavier workloads more effectively, and keep the pipeline moving with greater consistency.

In practical terms, that means:

- better job orchestration,

- more robust multiprocessing behavior,

- improved parallel worker execution,

- more efficient handling of CPU-heavy analysis tasks,

- cleaner separation between different parts of the pipeline,

- and more dependable operation when multiple users are submitting tracks.

This helps the community in a very direct way. Faster and steadier analysis means less waiting, fewer pipeline bottlenecks, and a platform that is better prepared for real demand.
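For readers who want a feel for what parallel worker execution looks like, here is a small sketch using Python's standard `concurrent.futures` module. It is a generic pattern under stated assumptions, not a description of our pipeline: `analyze_track` is a hypothetical stand-in for a real analysis step, and the result dictionary layout is invented for the example.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def analyze_track(path: str) -> dict:
    """Hypothetical stand-in for a CPU-heavy analysis step."""
    if not path:
        raise ValueError("empty path")
    return {"path": path, "checksum": sum(path.encode("utf-8")) % 1000}


def run_jobs(paths, executor_cls=ThreadPoolExecutor, max_workers=4):
    """Analyze paths in parallel; one failing job never sinks the batch."""
    results = {}
    with executor_cls(max_workers=max_workers) as pool:
        futures = {pool.submit(analyze_track, p): p for p in paths}
        for future in as_completed(futures):
            path = futures[future]
            try:
                results[path] = {"status": "completed",
                                 "result": future.result()}
            except Exception as exc:  # record the failure and keep going
                results[path] = {"status": "failed", "error": str(exc)}
    return results


batch = run_jobs(["demo_a.wav", "demo_b.mp3", ""])
```

For genuinely CPU-bound work, `ProcessPoolExecutor` from the same module exposes the same interface and can be passed as `executor_cls`, typically from inside an `if __name__ == "__main__":` guard.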

When you are trying to check a suspicious track quickly — whether for a dispute, a release review, or simple peace of mind — speed matters.

Better queue handling and job lifecycle management

We also put a lot of work into improving the job system around the detector.

That may sound boring compared with model updates, but it matters more than people think.

A detection tool is not just a model. It is a full workflow: upload, queue, process, inspect, return results, store analytics, and handle failures cleanly if something goes wrong. If any of that breaks under load, users lose trust fast.

The new version improves several parts of that lifecycle:

- clearer queued, processing, completed, and failed states,

- safer task handling in the background worker,

- more reliable cleanup after jobs finish,

- better support for concurrent submissions,

- and more stable behavior when different system components are running at once.

This means the detector is not only smarter at the analytical level, but also more professional as a service.

That is a big deal for community trust. Nobody wants a detector that feels random, stuck, or brittle. People want a tool that behaves like a product they can actually rely on.
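The queued, processing, completed, and failed states mentioned above map naturally onto a small state machine. The sketch below is one hypothetical way to enforce legal transitions; the `JobState` and `Job` names and the transition table are assumptions for illustration, not our internal job model.

```python
from enum import Enum


class JobState(Enum):
    QUEUED = "queued"
    PROCESSING = "processing"
    COMPLETED = "completed"
    FAILED = "failed"


# Which states each state may move to. Terminal states allow nothing.
ALLOWED_TRANSITIONS = {
    JobState.QUEUED: {JobState.PROCESSING, JobState.FAILED},
    JobState.PROCESSING: {JobState.COMPLETED, JobState.FAILED},
    JobState.COMPLETED: set(),
    JobState.FAILED: set(),
}


class Job:
    def __init__(self, job_id: str):
        self.job_id = job_id
        self.state = JobState.QUEUED

    def advance(self, new_state: JobState) -> None:
        """Move to new_state, rejecting transitions the table does not allow."""
        if new_state not in ALLOWED_TRANSITIONS[self.state]:
            raise ValueError(
                f"{self.job_id}: {self.state.value} -> {new_state.value} "
                "is not a valid transition"
            )
        self.state = new_state


job = Job("demo-1")
job.advance(JobState.PROCESSING)
job.advance(JobState.COMPLETED)
```

Making terminal states explicit is what keeps a finished or failed job from being silently re-run or left in limbo.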

More transparent result structure

Another improvement we are happy about is better result transparency.

We are not publishing the private fusion formula, but we are giving the platform a more informative internal result structure so users can benefit from richer analysis output over time. Instead of reducing everything to a flat binary-style read, the upgraded system is designed around a verdict plus a broader family of supporting signal scores.

That matters because AI music detection is not a coin flip.

Users increasingly want to know not just what the verdict is, but why the system leaned that way. They want to understand whether a track showed suspicious rhythmic behavior, unusual vocal traits, provenance clues, structural consistency, or other signs that contributed to the outcome.

We believe the future of trustworthy detection is not blind certainty. It is better transparency without exposing exploit instructions.

This release moves us further in that direction.

Better handling of consensus and conflict between signals

One of the smartest parts of the new engine is how it deals with agreement and disagreement across its internal signals.

Not every track is cleanly one thing or the other.

Some tracks may contain one very strong suspicious marker but several neutral ones. Others may show a broad cluster of moderate suspicious indicators. Some may look questionable at first glance but have deeper characteristics that argue for a human-made origin. Good detection systems need a way to navigate that tension.

Our upgraded fusion layer does a better job of responding to:

- cases where multiple suspicious signals align,

- cases where one strong “human-like” signal should limit overconfidence,

- and cases where provenance or authenticity clues deserve special consideration.

This is one of the key reasons the update matters. It makes the detector more mature. It moves the system closer to reasoned analysis and further away from cheap pattern matching.
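As a purely illustrative toy, the three behaviors listed above (agreement between signals, a strong human-like signal limiting overconfidence, and provenance getting special weight) could be expressed like this. Every number, threshold, and name here is made up for the example, and this is explicitly not our fusion formula, which remains private.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    verdict: str
    score: float
    signals: dict


def fuse(signals: dict, human_evidence: float = 0.0,
         provenance_says_ai: bool = False) -> Assessment:
    """Toy fusion: signal values are in [0, 1], higher = more suspicious."""
    if not signals:
        raise ValueError("at least one signal is required")
    score = sum(signals.values()) / len(signals)

    # Agreement: several independently suspicious signals reinforce each other.
    if sum(1 for value in signals.values() if value >= 0.7) >= 3:
        score = min(1.0, score + 0.1)
    # Conflict: one strong human-like indicator caps overconfidence.
    if human_evidence >= 0.8:
        score = min(score, 0.5)
    # Provenance: trusted credentials pointing to AI origin get special weight.
    if provenance_says_ai:
        score = max(score, 0.9)

    if score >= 0.7:
        verdict = "likely AI-generated"
    elif score >= 0.4:
        verdict = "inconclusive"
    else:
        verdict = "likely human-made"
    return Assessment(verdict, score, dict(signals))


suspicious = {"rhythm": 0.8, "spectral": 0.8, "vocal": 0.8}
aligned = fuse(suspicious)
capped = fuse(suspicious, human_evidence=0.9)
credentialed = fuse({"rhythm": 0.1, "spectral": 0.2}, provenance_says_ai=True)
```

The point of the sketch is the shape of the logic: the output is a verdict plus the supporting signal scores, and no single rule gets to decide alone unless the evidence behind it is unusually strong.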

Smarter use of audio classification without becoming dependent on it

We have also strengthened the role of model-backed audio classification in the detector, much like modern AI mastering systems in 2025 that blend signal processing with learned models.

This does not mean we have turned the product into a black box that blindly trusts one neural model. Quite the opposite. We still believe single-model answers are not enough for a problem this tricky. But a well-integrated classification layer can be extremely useful when it is used as one signal among many.

That is how we approach it.

The new version improves the way model-driven judgments fit into the broader detection workflow. It supports stronger analysis of full-song characteristics while still being balanced by forensic, structural, rhythmic, and provenance-aware evidence.

For the community, that means the detector is becoming more capable without becoming simplistic.

Better support for artist protection and dispute workflows

A huge reason we built these upgrades is to better support the people caught in the middle of AI accusations.

That includes:

- artists falsely accused of using AI,

- producers trying to defend original work,

- labels reviewing questionable submissions,

- managers investigating suspicious demos,

- curators screening uploads,

- rights holders assessing content risk,

- and remixers who need a credible signal before a disagreement gets out of hand.

An AI music detector should not inflame confusion. It should help reduce it.

This update makes the tool more useful in that role because it is built to review more evidence, operate more consistently, and surface a stronger overall assessment. It will not replace legal review. It will not replace human listening. It will not solve every dispute automatically. But it can help people make better decisions earlier, which is often where the real value lies.

Better analytics for the platform itself

We have also improved how the system stores and tracks analysis outcomes internally.

That matters for two reasons.

First, better analytics help us improve the detector over time. We can study aggregate behavior, understand how the engine performs across a growing pool of uploads, and refine future releases based on real usage patterns.

Second, better analytics help us give the community a richer sense of what the platform is seeing overall. As the AI music landscape keeps evolving, it becomes more useful to understand broader patterns across processed tracks, suspicious uploads, and the shifting line between human-made and AI-assisted work.

We are building toward a detector that does not just score one file at a time, but becomes more informed as part of a larger ecosystem of authenticity signals.

Built for scale without losing the mission

A lot of AI tools lose the plot when they grow. They focus on throughput and forget purpose.

We are trying to do the opposite.

Yes, this update includes serious infrastructure improvements. Yes, it is more scalable. Yes, it handles more parallel work. Yes, it is better designed for real usage. But all of that only matters because the mission matters.

The mission is to help the music community navigate a messy new reality with more clarity.

We want artists to have a stronger way to defend themselves against lazy accusations.

We want rights holders to have a more credible first-pass review tool.

We want labels and curators to have something smarter than guesswork.

We want suspicious uploads to be easier to investigate.

We want creators to understand that authenticity now needs better tooling around it.

And we want to do all of that without pretending we have invented magic. We have not. This is hard. Detection will keep evolving because generation will keep evolving too. But this release is a real step forward.

What we are not doing

It is also worth saying clearly what this update is not.

We are not publishing the exact weighting system.

We are not revealing our decision thresholds.

We are not disclosing every feature family, every fallback rule, or every internal confidence adjustment.

We are not turning the detector into an exploit manual for people trying to evade scrutiny.

And we are not claiming infallibility.

That balance matters. Transparency is good. Naivety is not.

The right way to build trust in a detector like this is to explain the philosophy, the improvements, and the user value — while protecting the internal logic that gives the system its edge and helps prevent gaming.

Where this goes next

This update is a major one, but it is not the finish line.

AI music generation is evolving fast. Detection has to evolve with it. That means we will keep improving our analysis stack, keep refining the blend between forensic signals and model-backed review, keep exploring provenance standards, keep strengthening stability and scale, and keep listening to how artists, labels, managers, and rights holders are actually using the tool.

The community needs more than hot takes right now. It needs better systems.

That is what we are building.

Our goal is to make the AI Music Detector a serious utility for a serious problem: a tool that helps creators protect their work, helps professionals investigate suspicious tracks, helps reduce noise in heated debates, and gives the music world a more intelligent way to ask the authenticity question.

This new release gets us closer.

Final thoughts

If you have used our detector before, this update should feel more mature in all the ways that matter: smarter, broader, faster, steadier, and more useful.

If you are new to the platform, this is a strong time to try it.

The detector now brings together a richer analysis framework, improved concurrency, stronger queue handling, more robust result delivery, provenance-aware review, expanded signal diversity, better stem-aware inspection, and a more thoughtful fusion layer that is designed to handle the messy reality of modern music.

That is not hype. That is the work.

We know how sensitive this space is. We know a result can affect trust, reputation, release decisions, and difficult conversations. That is exactly why we take the product seriously.

This update is for the artists trying to protect real work.

It is for the producers trying to prove a point with evidence instead of arguments.

It is for the labels and curators trying to review tracks more responsibly.

It is for the broader music community trying to adapt to a world where the line between human creation, AI generation, and hybrid production is getting harder to read by ear alone. The subtle differences between AI-generated music and human-created music can be significant, but often require attentive listening and technical analysis to identify, making reliable distinction an ongoing challenge.

And it is for everyone who believes that better tools can make this conversation less chaotic and more grounded.

We are proud of what this release adds.

There is more to come.

But this version of the AI Music Detector is stronger, sharper, and more ready for the moment than anything we have released before. Our detector can also analyze music within videos and content from platforms like YouTube, though keep in mind that low-quality audio sources may affect detection accuracy.

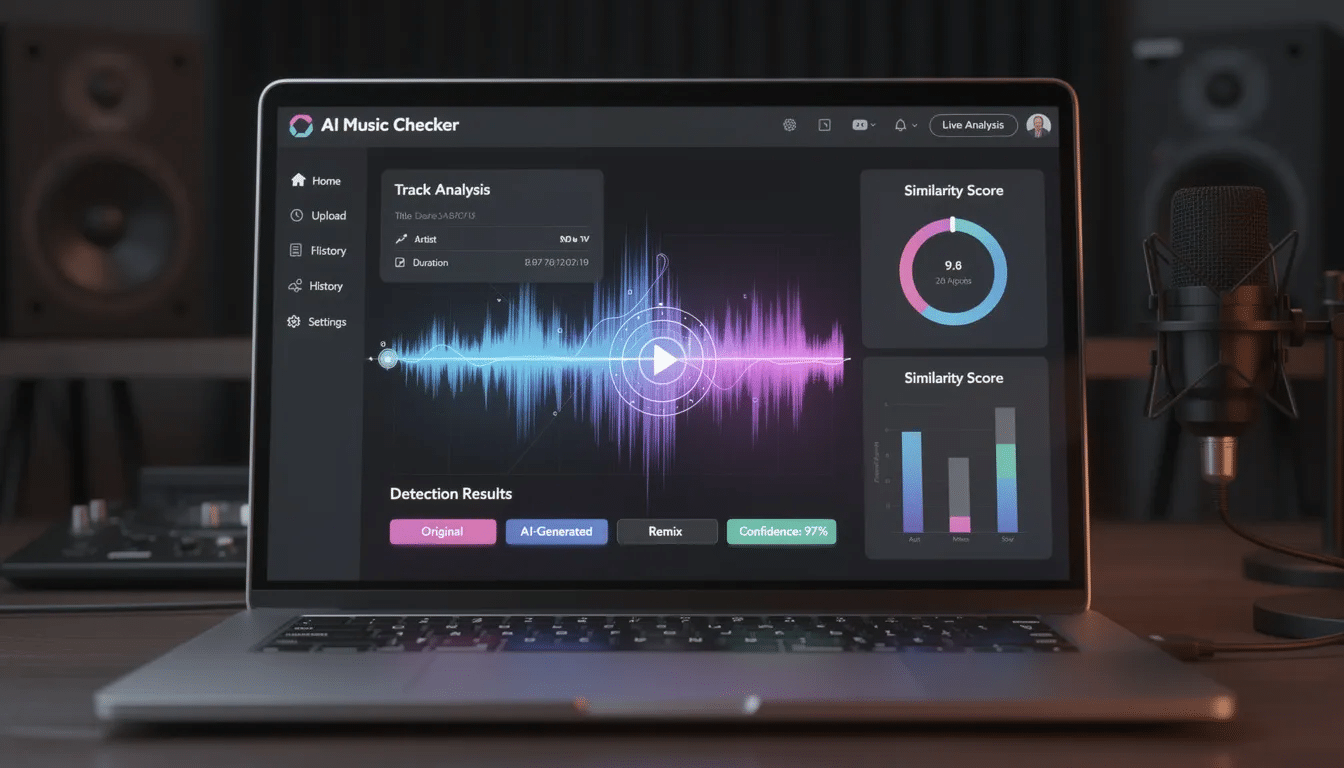

The professional standard for AI Music Detection. We analyze spectral artifacts, groove quantization, and phase coherence to distinguish human artistry from deepfake synthesis.


Music Generators and Detection

The explosion of AI-generated music has introduced a new wave of creativity, and complexity, into the music industry. Music generators, from earlier tools such as Amper Music, AIVA, and Jukedeck to newer systems such as Suno and Udio, let users create entire songs with just a few clicks, making music production more accessible than ever. However, this ease of creation also brings new challenges for authenticity and copyright, as AI-generated songs can closely mimic the sound and structure of human-made tracks.

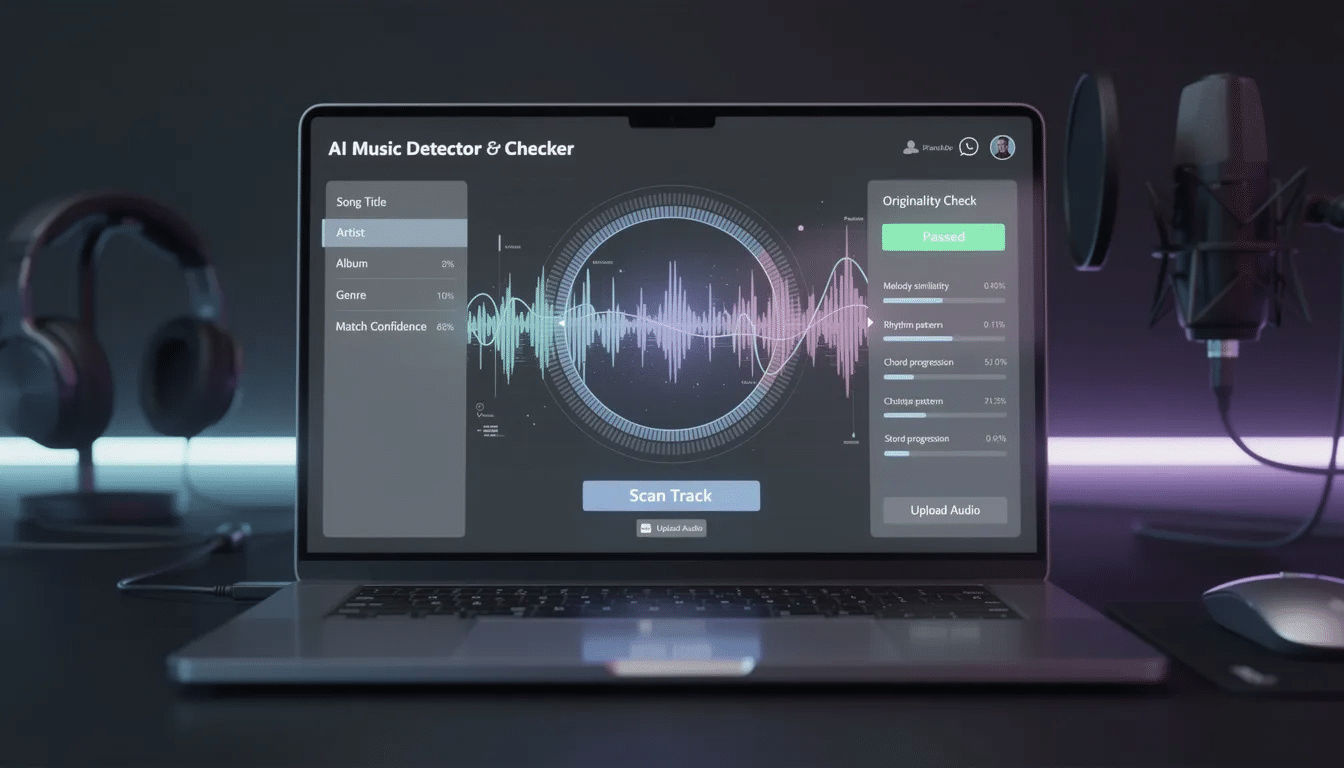

AI music detection tools, like the AI Music Detector, are designed to meet this challenge head-on. By analyzing intricate audio features such as spectral fingerprints, rhythmic patterns, and subtle production cues, these tools estimate whether a song is likely AI-generated or crafted by a human artist. This process involves advanced audio forensics that go beyond surface-level listening, allowing rights holders, streaming platforms, and industry professionals to detect AI-generated music with greater confidence.

As AI music generators continue to evolve, the music industry is increasingly relying on robust music detection solutions to manage and verify content. Streaming platforms are using these tools to screen uploads, while rights holders depend on them to protect their catalogs and ensure proper attribution. For users, the ability to analyze and detect AI-generated music is essential for maintaining authenticity and trust in a rapidly changing musical landscape.

Support and Resources

Navigating the world of AI music detection is easier when you have the right support and resources at your fingertips. Leading providers of AI music detection tools offer a range of services to help users get the most out of their platforms. Comprehensive documentation and step-by-step tutorials guide users through everything from uploading audio files to interpreting detailed analysis results. Whether you’re new to music detection or an experienced producer, these resources make it simple to analyze tracks and understand the findings.

For those looking to integrate AI music detection into their own services or apps, many platforms provide APIs and developer guides, making it straightforward to add music detection capabilities to your workflow. Community forums and user groups offer a space to ask questions, share experiences, and connect with others who are passionate about AI music and audio analysis. For example, the AI Music Detector includes a dedicated support hub where users can find answers, troubleshoot issues, and stay up to date with the latest features. This strong support network ensures that everyone—from independent artists to industry professionals—can confidently use AI music detection tools to analyze, detect, and protect their music.

Getting Started

Getting started with AI music detection is designed to be as seamless as possible, even for first-time users. Most platforms, including the AI Music Detector, offer a straightforward process: simply upload your audio file—whether it’s an MP3, WAV, or M4A—or paste a track URL to begin the analysis. The intuitive interface guides you through each step, making it easy to detect AI-generated music without any technical hurdles.

Once your song or audio file is uploaded, select the detection option and let the tool analyze the audio features. In just moments, you’ll receive a detailed report indicating whether the track is likely AI-generated or human-made. The results are presented clearly, helping you understand the key factors behind the determination. For those who want to dive deeper, additional resources such as tutorials and documentation are available to help you interpret results and make informed decisions. Whether you’re an artist, producer, or rights holder, AI music detection tools make it simple to upload, analyze, and detect AI-generated music with confidence.

SEO Optimization and Best Practices

To ensure that AI music detection tools reach the widest possible audience, it’s essential to follow SEO optimization and best practices. By strategically using relevant keywords—such as “AI generated music,” “AI music detection,” “detect AI generated music,” and “audio file”—providers can improve their search engine rankings and make their tools more discoverable to users across the music industry.

Accessibility and user-friendliness are also key. A well-designed, intuitive interface encourages users to engage with the tool, while clear documentation and support resources help them get the most out of every feature. Incorporating long-tail keywords like “AI generated music detection tools” and “AI music detection software” can further target specific search queries, attracting independent artists, rights holders, and industry professionals looking for reliable music detection solutions.

By combining strong SEO strategies with a focus on accessibility and user experience, providers can ensure their AI music detection tools are easy to find, easy to use, and trusted by the music community. This approach not only drives more traffic but also supports the broader mission of maintaining authenticity and integrity in the evolving world of AI-generated music.

How Our AI Music Detector Works

Unlike simple classifiers, our AI Music Detector uses a Multi-Modal Forensic Ensemble. We deconstruct the audio signal into physics-based components to verify its origin. Analyzing the frequency spectrum is crucial for identifying watermarks or frequency patterns that can distinguish AI-generated music from human-created tracks. More broadly, AI music detection technology also analyzes audio to identify songs, genres, moods, and patterns.

Spectral Artifact Analysis

Generative models like Suno and Udio often leave microscopic grid-like patterns in the frequency domain. Our AI Music Detector performs Cepstral Analysis to calculate the Peak-to-Noise Ratio (PNR) of these artifacts.

Φ_fourier ∝ max(C[q]) / E[C[q]]
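The ratio above can be sketched in a few lines of NumPy. This is a textbook real-cepstrum computation shown under assumptions, not our production feature: the function name `cepstral_peak_ratio`, the `min_quefrency` cutoff, and the use of an artificial echo as a stand-in for periodic spectral structure are all choices made for the illustration.

```python
import numpy as np


def cepstral_peak_ratio(samples: np.ndarray, min_quefrency: int = 2) -> float:
    """Peak-to-mean ratio of the real cepstrum, i.e. max(C[q]) / E[C[q]]."""
    spectrum = np.fft.rfft(samples)
    log_magnitude = np.log(np.abs(spectrum) + 1e-12)  # epsilon avoids log(0)
    cepstrum = np.abs(np.fft.irfft(log_magnitude))
    # Skip the lowest quefrencies (overall level and spectral tilt) and the
    # mirrored second half of the cepstrum.
    usable = cepstrum[min_quefrency:len(cepstrum) // 2]
    return float(usable.max() / usable.mean())


rng = np.random.default_rng(0)
noise = rng.standard_normal(4096)
# A delayed copy adds a regular ripple to the spectrum, which shows up as a
# sharp cepstral peak at the delay (100 samples here).
echoed = noise + 0.8 * np.roll(noise, 100)

ratio_noise = cepstral_peak_ratio(noise)
ratio_echoed = cepstral_peak_ratio(echoed)
```

Regular, grid-like structure in the frequency domain concentrates energy at a single quefrency, so the peak stands far above the average and the ratio rises.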

Phase Physics & Entropy

This is our “Safety Valve.” Organic recordings have complex phase relationships. AI models often generate “naive” phase information with anomalously low entropy. If the physics don’t match reality, we flag it.

H(f) = -∑ p(f_k) log p(f_k)
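One simple reading of the entropy formula is to treat p(f_k) as a histogram over the phase angles of the frequency bins. That interpretation, the bin count, and the function name `phase_entropy` are assumptions for this sketch; the detector's real phase analysis is not published.

```python
import numpy as np


def phase_entropy(samples: np.ndarray, bins: int = 64) -> float:
    """Shannon entropy (in nats) of the distribution of spectral phase angles."""
    phases = np.angle(np.fft.rfft(samples))
    counts, _ = np.histogram(phases, bins=bins, range=(-np.pi, np.pi))
    probabilities = counts / counts.sum()
    probabilities = probabilities[probabilities > 0]  # treat 0 * log(0) as 0
    return float(-(probabilities * np.log(probabilities)).sum())


rng = np.random.default_rng(1)
noisy = rng.standard_normal(4096)   # phases spread over the whole circle
impulse = np.zeros(4096)
impulse[0] = 1.0                    # every frequency bin has phase 0

entropy_noisy = phase_entropy(noisy)
entropy_impulse = phase_entropy(impulse)
```

The random signal lands close to the maximum possible value of log(64), while the perfectly aligned impulse scores zero, which is the kind of "too tidy" phase behavior the section describes.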

Rhythmic Quantization

Human drummers have micro-deviations (groove). AI models tend to snap transients to a perfect mathematical grid. We analyze the variance of Inter-Beat Intervals to detect hyper-quantization.
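The inter-beat interval idea is easy to show on synthetic onset times. In this sketch the onsets are given directly in seconds; in practice they would come from an onset or beat tracker, which is outside the scope of the example. The function name and the 10 ms jitter figure are illustrative assumptions.

```python
import numpy as np


def ibi_variance(onset_times) -> float:
    """Variance of inter-beat intervals (seconds squared) from onset times."""
    onsets = np.sort(np.asarray(onset_times, dtype=float))
    if len(onsets) < 3:
        raise ValueError("need at least three onsets to measure variation")
    intervals = np.diff(onsets)
    return float(np.var(intervals))


# 120 BPM on a perfect grid versus the same beats with ~10 ms of human drift.
grid = np.arange(16) * 0.5
rng = np.random.default_rng(2)
played = grid + rng.normal(0.0, 0.01, size=grid.size)

variance_grid = ibi_variance(grid)
variance_played = ibi_variance(played)
```

Near-zero variance alone is not proof of anything, since plenty of human productions are quantized on purpose, which is why this works best as one signal among several.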

Heuristic Gating Logic

Our AI Music Detector uses non-linear gating. Even when a song sounds perfect, our “Veto” system flags it if the phase entropy indicates synthetic generation, which helps prevent false negatives.
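In code, a veto gate is just a rule that can override the combined score. The sketch below shows the general pattern only; the function name, the `entropy_floor` value, and the score threshold are hypothetical placeholders, not our real thresholds.

```python
def gated_flag(ensemble_score: float, phase_entropy: float,
               entropy_floor: float = 2.0, threshold: float = 0.5) -> bool:
    """Return True when a track should be flagged as likely synthetic."""
    # Veto: anomalously low phase entropy flags the track no matter how
    # clean the other signals look.
    if phase_entropy < entropy_floor:
        return True
    # Otherwise fall back to the ordinary combined score.
    return ensemble_score >= threshold


clean_but_low_entropy = gated_flag(ensemble_score=0.1, phase_entropy=0.4)
clean_and_organic = gated_flag(ensemble_score=0.1, phase_entropy=3.9)
suspicious_score = gated_flag(ensemble_score=0.8, phase_entropy=3.9)
```

The gate is non-linear in the sense that no amount of reassuring evidence elsewhere can average away a failed physics check.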

Introduction to Music Detection

The rapid advancement of artificial intelligence has transformed the music industry, ushering in a new era of AI-generated music that often blurs the line between human and machine creativity. As AI music generators become more sophisticated, it’s increasingly challenging to distinguish between tracks crafted by artists and those produced by algorithms. This is where AI music detection comes into play. By analyzing intricate audio features within a song or audio file, AI music detection tools can estimate whether a piece of music is AI-generated or the product of human artistry. These tools are essential for rights holders, streaming platforms, and music enthusiasts who want to ensure authenticity and maintain the integrity of the music industry. As AI-generated music becomes more prevalent, the ability to detect AI-generated music is not just a technical challenge; it’s a necessity for anyone invested in the future of music.

Training Data for Detectors

The effectiveness of any AI music detection system hinges on the quality and diversity of its training data. To accurately detect AI-generated music, detectors must be trained on a comprehensive dataset that spans a wide array of genres, production styles, and audio formats, including WAV, MP3, and FLAC. This dataset should feature both AI-generated and human-created tracks, allowing the system to learn the subtle differences in sound, structure, and production techniques. Regularly updating the training data is crucial, as music generators and AI models continue to evolve, introducing new patterns and audio features. By leveraging high-quality, diverse training data, AI music detection tools can more reliably determine the origin of a song, minimizing false positives and ensuring that both artists and listeners can trust the results.

AI Music and Copyright

The intersection of AI-generated music and copyright law presents new challenges for the music industry. As AI music becomes more widespread, it’s vital to protect the rights of human creators and ensure that AI-generated content is properly attributed, especially in scenes like hip-hop where AI and royalty-free instrumentals are reshaping the genre’s future. AI music detection plays a key role in this process by helping rights holders and streaming platforms identify tracks that may infringe on existing copyrights or misrepresent their origins. By using advanced music detection services, the industry can uphold standards of authenticity and creativity, ensuring that human contributions are recognized and rewarded. This not only safeguards the interests of artists but also helps streaming platforms and music services maintain compliance with copyright regulations, fostering a fair and innovative environment for all.

Music Industry Applications

AI music detection is rapidly becoming an indispensable tool across the music industry, sitting alongside the best AI tools for rappers and music producers as part of a modern creative and technical toolkit. Streaming platforms and music services use these detectors to screen uploaded audio files, ensuring compliance with their policies on AI-generated music and protecting their catalogs from unauthorized content. Record labels and publishers rely on AI music detection to verify the authenticity of submitted tracks, safeguarding artists’ unique styles from imitation by AI music generators and complementing the AI tools now shaping hip-hop production workflows. Royalty collection organizations utilize these tools to accurately attribute works and distribute royalties, ensuring that both human and AI-generated songs are properly accounted for. Even music competitions and awards are turning to AI music detection to confirm that entries meet requirements for human composition and performance, preserving the integrity of their events. In every corner of the industry, AI music detection is helping to maintain authenticity and support the creative community.

Future of Music Detection

Looking ahead, the future of music detection is set to be shaped by ongoing advancements in artificial intelligence and audio forensics. As AI music generators continue to push creative boundaries, AI music detection tools must evolve in tandem, employing ever more sophisticated techniques to detect AI-generated music. The integration of AI music detection with emerging technologies like blockchain and digital watermarking could offer new ways to protect intellectual property and verify authenticity across streaming platforms and music services. With these innovations, musicians, artists, and fans will have greater confidence in the music they create, share, and enjoy. As AI-generated music becomes more accessible, the ability to detect AI-generated tracks will be essential for preserving human creativity, ensuring fair recognition, and fostering a vibrant, authentic music industry for the future.